While 90% of enterprises are actively adopting AI agents, and 79% of enterprises expect full-scale adoption of agentic AI in the next 3 years, there's no single "right" way to scale AI across an organization. What matters more is finding the model that fits how your company actually operates.

Shortening the time from idea to a production-level AI agent that delivers real value comes down to a few key questions:

Who decides what gets built?

Who defines standards?

Who builds and maintains agents?

Who uses them, and improves them over time?

If you've read Frederic Laloux's Reinventing Organizations, the parallel is hard to miss. Just as Laloux maps how companies distribute authority, from top-down hierarchies to self-managing "Teal" organizations, AI adoption raises the same tension. How much autonomy do you give teams? How much do you centralize? There's no neutral answer. Your choice reflects what your organization already believes about trust, control, and who's allowed to build.

After working with over 150 enterprises and deploying thousands of AI agents, we've seen four models consistently emerge. All of them work, but each comes with trade-offs, and most companies move from one to another over time.

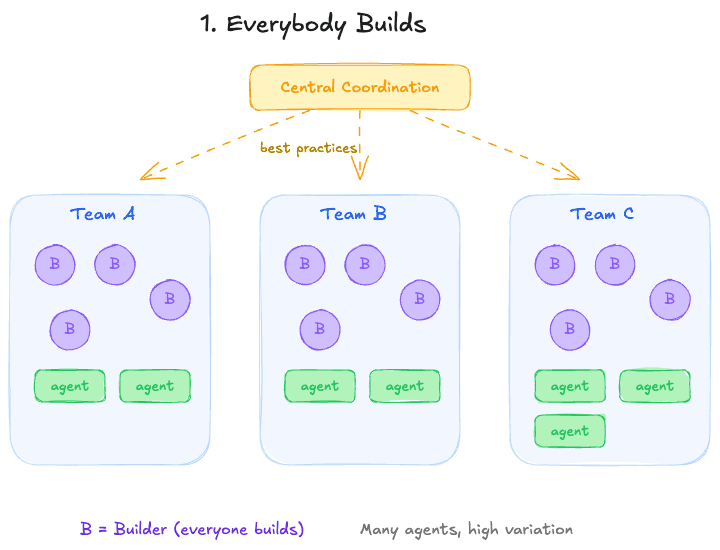

Model 1: Everybody Builds

Under this model, everyone is trained and enabled to build their own agents. A central lead (or small group) shares best practices, runs trainings, and promotes reuse.

Day-to-Day Reality:

Teams build and iterate independently

Fast feedback loops

High ownership

Prioritization happens within each team. There's no dependency on a central function to get started.

Watch Out For:

Duplicated work, inconsistent quality, and agents that quietly break when the person who built them moves on. Some lightweight coordination is essential to make this model sustainable. Mitigate this with a shared registry of agents (so teams can see what already exists), lightweight templates or standards for how agents are built, and a regular cadence—even monthly—where teams share what they've built and learn from each other.
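As a rough illustration of the shared registry idea, here is a minimal Python sketch. Everything in it is hypothetical: the `AgentEntry` fields, the `AgentRegistry` class, and the tag-overlap check are assumptions made for the example, not a description of any particular product. It only shows how registering an agent can surface similar agents that other teams have already built.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentEntry:
    """One registered agent (hypothetical fields, chosen for illustration)."""
    name: str
    team: str
    owner: str
    purpose: str
    tags: set[str] = field(default_factory=set)


class AgentRegistry:
    """In-memory registry; a real one would likely live in a shared tool."""

    def __init__(self) -> None:
        self._entries: dict[str, AgentEntry] = {}

    def find_similar(self, tags: set[str]) -> list[AgentEntry]:
        # Two agents count as "similar" here if they share at least one tag.
        return [e for e in self._entries.values() if e.tags & tags]

    def register(self, entry: AgentEntry) -> list[AgentEntry]:
        # Return existing look-alikes so the builder sees them before duplicating work.
        overlaps = self.find_similar(entry.tags)
        self._entries[entry.name] = entry
        return overlaps


registry = AgentRegistry()
registry.register(
    AgentEntry("invoice-triage", "finance", "dana", "Route incoming invoices", {"invoices", "routing"})
)
overlaps = registry.register(
    AgentEntry("invoice-sorter", "ops", "lee", "Sort invoices by vendor", {"invoices"})
)
print([e.name for e in overlaps])  # ['invoice-triage']
```

Tag overlap is a deliberately crude similarity test. Its only job is to prompt a conversation between the two teams before a second agent gets built.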

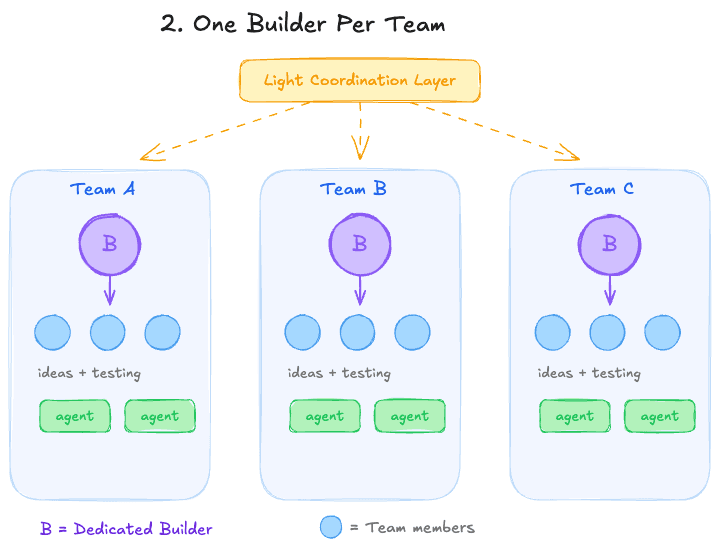

Model 2: One Builder Per Team

Each team has a dedicated "AI lead" or power user who builds most agents. The rest of the team contributes ideas, testing, and refinements.

Sometimes there's also a light central coordination layer across teams.

Day-to-Day Reality:

Clear ownership within each team

Faster execution than centralized models

More consistency than fully distributed setups

Teams can still move quickly, but with more structure and quality control.

Watch Out For:

Everything depends on the builder. If they leave, get overloaded, or become a bottleneck, the team stalls. Knowledge sharing between builders matters more than most companies expect. Mitigate this by pairing builders across teams so knowledge isn't siloed, documenting how agents work (not just that they exist), and making sure at least one other person per team can maintain what's been built.
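One way to keep the "at least one other person" rule honest is to check it periodically. The sketch below is a hypothetical example: the `single_maintainer_agents` function, the agent names, and the mapping of agents to maintainers are all assumptions, and in practice the data would come from wherever your teams track ownership.

```python
def single_maintainer_agents(maintainers: dict[str, list[str]]) -> list[str]:
    """Return agents that fewer than two distinct people can maintain."""
    return sorted(
        name for name, people in maintainers.items() if len(set(people)) < 2
    )


# Hypothetical ownership data: agent name -> people able to maintain it.
maintainers = {
    "lead-scoring": ["priya"],
    "contract-review": ["sam", "alex"],
    "ticket-summary": [],
}

at_risk = single_maintainer_agents(maintainers)
print(at_risk)  # ['lead-scoring', 'ticket-summary']
```

Agents on this list are the ones that would stall if the builder left or became overloaded.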

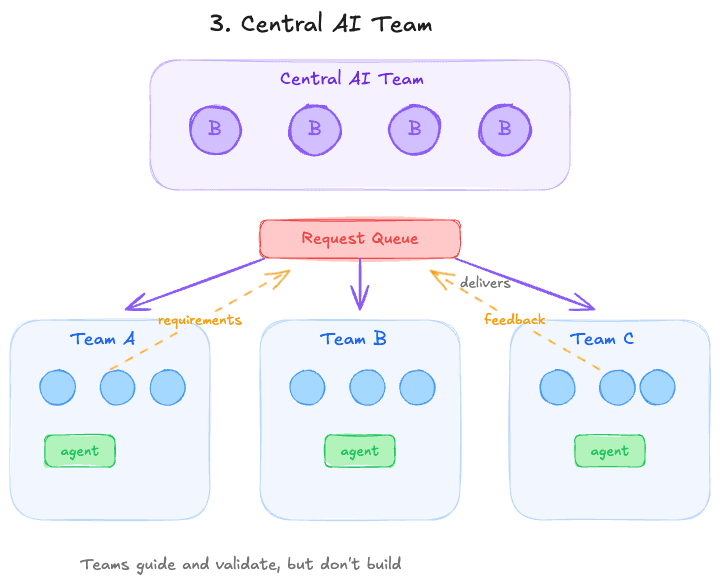

Model 3: Central AI Team

A dedicated internal AI team designs, builds, and maintains agents for the rest of the organization. Business teams act primarily as requesters and users, contributing requirements and testing.

Day-to-Day Reality:

Centralized development

Slower iteration cycles

Higher control and standardization

Teams don't build directly—they guide and validate.

Watch Out For:

The central team can become a bottleneck. Over time, the gap between what business teams request and what they actually need tends to widen. Mitigate this by embedding a central team member in each business team on a rotating basis, keeping request-to-delivery cycles short (weeks, not quarters), and giving business teams visibility into the backlog so they can prioritize together rather than just submit tickets into a void.

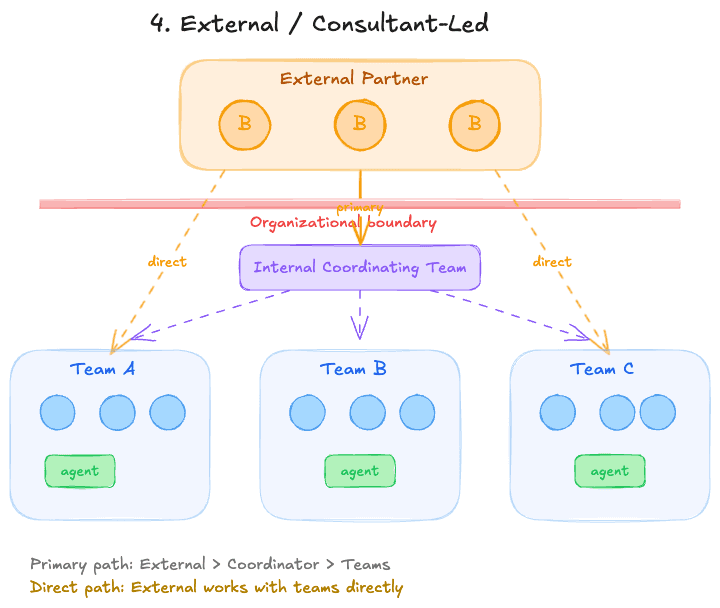

Model 4: External / Consultant-Led

An external team designs, builds, and often maintains agents end-to-end. Internal teams act primarily as users, with limited input on requirements and testing.

Day-to-Day Reality:

Fast initial delivery

Low internal capability building

Dependency on external partner for changes

Watch Out For:

This model can work well as a bridge while you build internal capability. But if it stays the long-term model, the organization never develops its own AI muscle. Mitigate this by structuring the engagement as a knowledge transfer from the start: require the external partner to co-build with internal staff, document everything they deliver, and set a clear timeline for when ownership transitions in-house.

Quick Comparison Table

| Model | Speed | Control | Scalability | Ownership | Typical Risk |
| --- | --- | --- | --- | --- | --- |
| Everybody Builds | High | Low | Medium | Very High | Fragmentation, duplication |
| One Builder Per Team | Medium-High | Medium | High | High | Bottlenecks at builder level |
| Central AI Team | Low-Medium | High | Medium | Low | Slow delivery, disconnect from teams |
| External / Consultant-Led | Medium | Medium | Low | Very Low | Dependency, low internal capability |

The Real Trade-Off

This isn't about choosing the "best" model.

Every model sits somewhere along two fundamental tensions:

Autonomy vs. Control — More autonomy means faster iteration and higher ownership. More centralization means tighter standards and easier governance.

Speed vs. Capability Building — External teams deliver fast but leave you dependent. Internal models are slower to start but compound over time.

The right answer depends on your structure, culture, and how ready your teams are to build. The wrong answer is assuming you'll never need to change it.

Want to see how StackAI helps every enterprise team go from idea to production-ready AI agent? Get a demo with our AI experts.